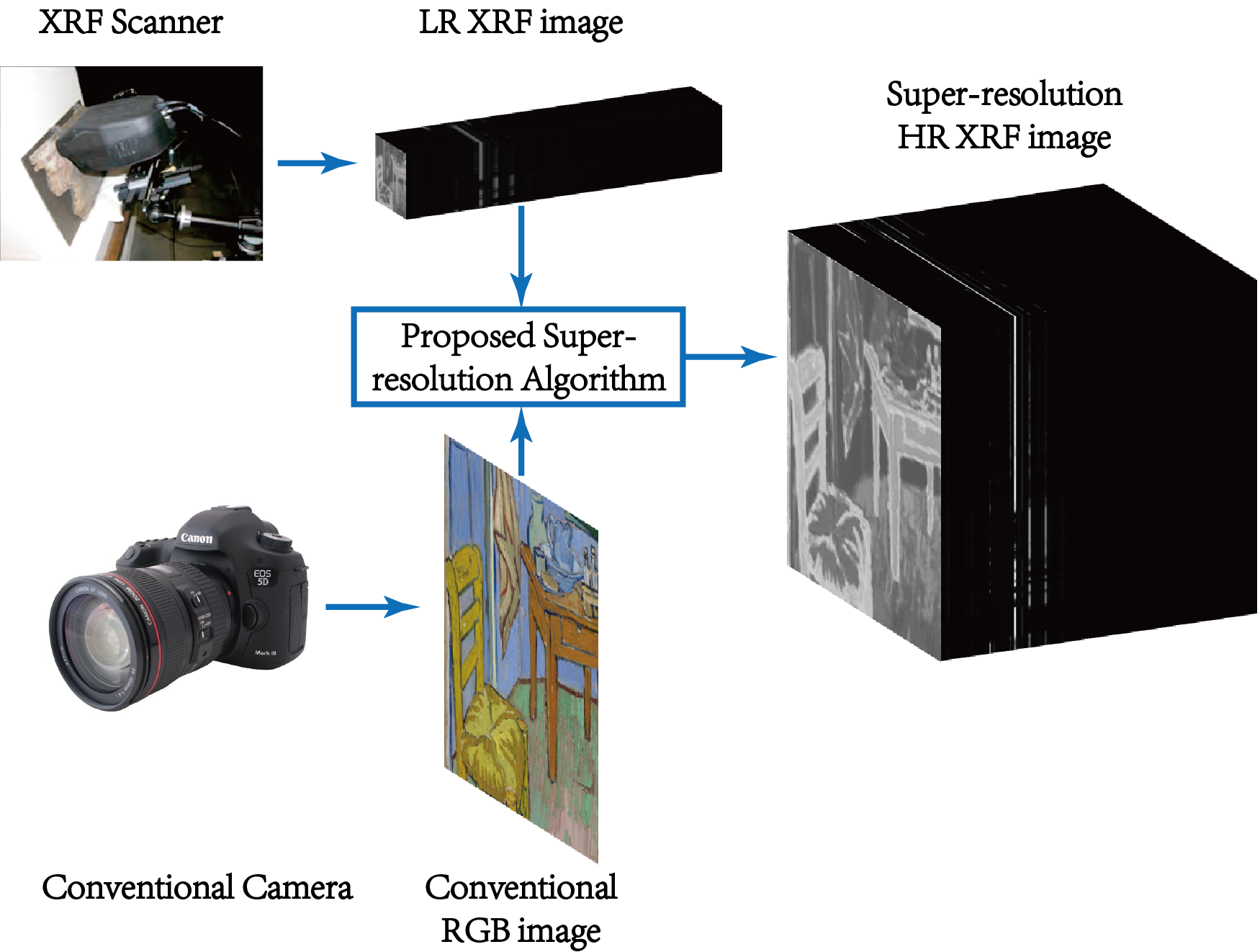

XRF images have high spectral resolution but low spatial resolution, whereas the opposite is true for conventional RGB images. A low-resolution (LR) XRF image and a high-resolution (HR) RGB image are fused to obtain an HR XRF image.

Project Description

X-ray fluorescence (XRF) scanning of works of art is becoming an increasingly popular non-destructive analytical method. High-quality XRF spectra are necessary to obtain significant information on both the major and minor elements used for characterization and provenance analysis. However, because scanning time is limited, there is a trade-off between the spatial resolution of an XRF scan and the signal-to-noise ratio (SNR) of each pixel's spectrum. In this project, we propose an XRF image super-resolution method to address this trade-off, obtaining a high-spatial-resolution XRF scan with high SNR. We fuse a low-resolution XRF image with a conventional high-resolution RGB image to produce an XRF image with both high spatial and high spectral resolution. There is no guarantee of a one-to-one mapping between XRF spectrum and RGB color; for instance, hidden layers in a painting cannot be detected at visible wavelengths but can be at X-ray wavelengths. We therefore separate the XRF image into visible and non-visible components. The spatial resolution of the visible component is increased using the high-resolution RGB image, while that of the non-visible component is increased using a total variation super-resolution method. Finally, the two components are combined to obtain the final result.
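To make the total variation step more concrete, the following is a minimal sketch of total variation super-resolution on a single image channel. It is not the implementation from the publications below. It assumes that the low-resolution image is an s-by-s block average of the high-resolution one, uses a smoothed TV penalty, and minimizes the objective by plain gradient descent. All function names and parameter values (`lam`, `eps`, `step`, `iters`) are illustrative choices.

```python
import numpy as np

def downsample(x, s):
    """Assumed degradation model: average each s x s block of an (H, W) image."""
    h, w = x.shape
    return x.reshape(h // s, s, w // s, s).mean(axis=(1, 3))

def downsample_adjoint(r, s):
    """Adjoint of `downsample`: spread each LR value evenly over its s x s block."""
    return np.kron(r, np.ones((s, s))) / (s * s)

def forward_diffs(x):
    """Horizontal and vertical forward differences, zero at the far borders."""
    dx = np.diff(x, axis=1, append=x[:, -1:])
    dy = np.diff(x, axis=0, append=x[-1:, :])
    return dx, dy

def objective(x, y, s, lam, eps):
    """0.5 * ||downsample(x) - y||^2 + lam * smoothed isotropic TV of x."""
    dx, dy = forward_diffs(x)
    data = 0.5 * np.sum((downsample(x, s) - y) ** 2)
    tv = np.sum(np.sqrt(dx**2 + dy**2 + eps**2))
    return data + lam * tv

def tv_gradient(x, eps):
    """Gradient of the smoothed TV term with respect to x."""
    dx, dy = forward_diffs(x)
    mag = np.sqrt(dx**2 + dy**2 + eps**2)
    px, py = dx / mag, dy / mag
    # Apply the adjoint of the forward-difference operators to (px, py).
    g = -(px + py)
    g[:, 1:] += px[:, :-1]
    g[1:, :] += py[:-1, :]
    return g

def tv_super_resolve(y, s, lam=0.02, eps=0.05, step=0.2, iters=200):
    """Upscale the LR image y by an integer factor s using gradient descent."""
    x = np.kron(y, np.ones((s, s)))  # nearest-neighbor initial guess
    for _ in range(iters):
        residual = downsample(x, s) - y
        grad = downsample_adjoint(residual, s) + lam * tv_gradient(x, eps)
        x = x - step * grad
    return x

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    lr = rng.random((8, 8))          # stand-in for one LR channel
    hr = tv_super_resolve(lr, s=4)
    print(hr.shape)
```

For a full XRF data cube, a sketch like this would be applied to each spectral channel (or each component map) independently. The default step size is chosen to stay below the inverse Lipschitz constant of the gradient for the default `lam` and `eps`; changing those values would require adjusting `step` accordingly.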

Publications

"X-ray fluorescence image super-resolution using dictionary learning"

Dai, Qiqin, Emeline Pouyet, Oliver Cossairt, Marc Walton, Francesca Casadio, and Aggelos Katsaggelos.

In Image, Video, and Multidimensional Signal Processing Workshop (IVMSP), 2016 IEEE 12th, pp. 1-5. IEEE, 2016.

[PDF]

"Spatial-Spectral Representation for X-Ray Fluorescence Image Super-Resolution"

Dai, Qiqin, Emeline Pouyet, Oliver Cossairt, Marc Walton, and Aggelos K. Katsaggelos.

IEEE Transactions on Computational Imaging 3, no. 3 (2017): 432-444.

[PDF]

Code

TBA